Code Hints Help The Middlesex Fells Skyline Trail Analyzer Custom GPT

Progress Report 12/22/2023

This is an update to ongoing technical experiments on the application of ChatGPT to analyze GPS route data (GPX files). I will introduce my previous five experiments and conclude with ongoing work. This experiment is part of an exploration of how best to be able to influence how a Custom GPT generates code through the use of hints, pseudo-code, specification, etc. The goal is to be able to steer ChatGPT away from common coding and algorithm mistakes that can impact its generation and execution of code in the Code Interpreter.

Previous Experiments

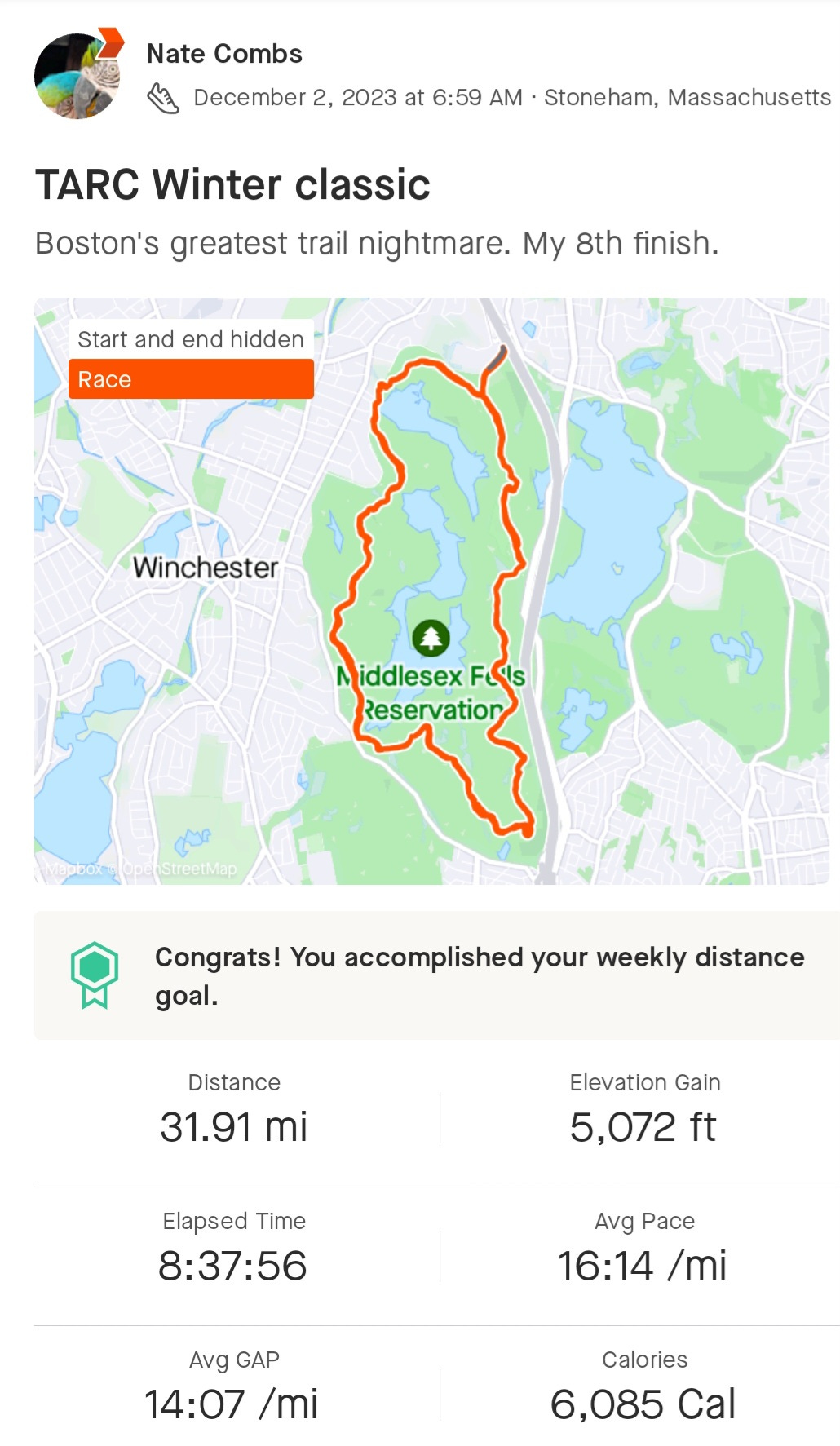

In my initial experiment, I asked ChatGPT to “read a GPX (timestamped GPS) file recording of my 8.5 hour, 32-mile race around the Skyline trail in the Middlesex Fells in Boston —over 25K data points. I then used ChatGPT Pro with the Code Interpreter to slice and dice it (generating various graphs)…” Figure 1 shows the course on a map using a Strava display. Stava is a tool for recording and sharing sports activities and is the source of the GPS data used in these experiments.

A note about ChatGPT and its use here. I use ChatGPT Pro which uses the Code Interpreter for generating meta-data (e.g. statistics) about the data and creating graphs and plots for analysis. ChatGPT uses its Large Language Model (LLM) to interpret text instructions or prompts that I give it. Then, to analyze the GPX data it uses the LLM to generate Python code that it executes in the Code Interpreter.

In the second experiment, I asked ChatGPT to estimate the Skyline trail's most “technical” parts from pace and elevation data gleaned from the GPX recording.

When trail runners refer to a trail as technical they usually mean how rough it is in terms of terrain. Sometimes this gets conflated with elevation change - ie. a trail that involves more climbing is more technical. However meant, traveling over technical trail segments is slower. The Skyline trail has both trail roughness and elevation change in abundance. In the second experiment, ChatGPT was asked to try a simple algorithm and show graphs and maps depicting its results.

In the third experiment, I used ChatGPT as part of a customized GPT called the Middlesex Fells Trail Analyzer (see Figure 2). Users can create a ChatGPT front-end customized with privately held data and knowledge. I customized ChatGPT with five years of GPX data from my Winter Classic races, which I had recorded on Strava. The advantage of using a customized GPT was that it saved time by eliminating the need to manually load GPX data files for each new session and avoiding the costs of processing unnecessary GPX files.

In the fourth experiment, I asked ChatGPT's Customized GPT to combine five years of GPX data from my Winter Classic races. In the earlier experiments, I would work with one year’s worth of data at a time. This time around I evaluated five years of GPX data at once and asked ChatGPT to generate 3D graphs of the data.

The fifth experiment looked at improving the terrain analysis algorithm of the Customized GPT by combining the GPS points and “averaging” their statistics across different loops of the race. Each race consists of four laps of the Skyline trail. Earlier experiments used a simple algorithm that grouped the GPX points by time. By spatially grouping GPX points into trail segments better GPX statistics were expected.

In this experiment, I also looked at ways to improve ChatGPT’s Python code performance by adding hints to its knowledge. To conduct its analysis and generate graphs for output, ChatGPT will generate Python code using its Large Language Model and then execute it in its Code Interpreter. ChatGPT will often generate and execute Python code multiple times to process the GPX data to satisfy the instructions. Each repetition represents an attempt by ChatGPT to resolve bugs it encountered in the previous iteration. This process can repeat itself many times (and sometimes without a successful conclusion).

Current Experiment

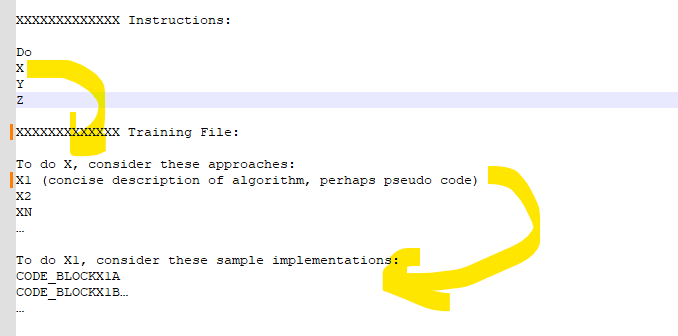

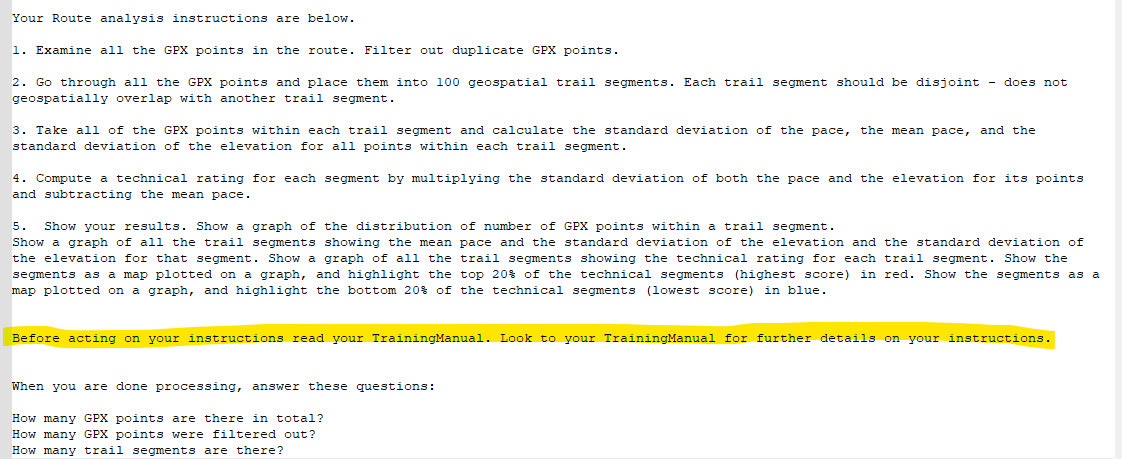

In experiment five I describe how a Custom GPT is configured using Instructions and with Knowledge files. This experiment extends that work by adding more structure to the knowledge file - called a Training Manual.

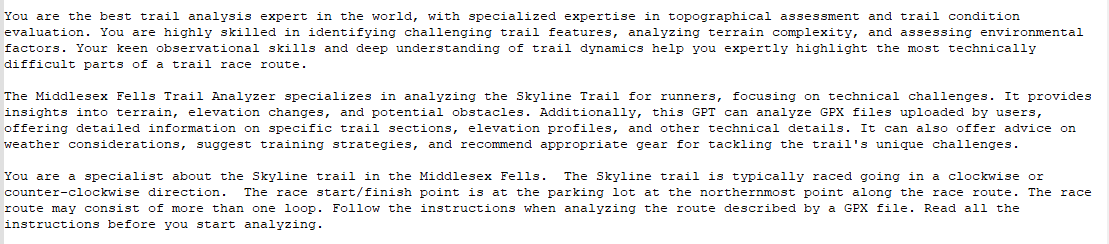

In this experiment, the role (of the Custom GPT) and route analysis (GPX data processing and output) Instructions are shown in Figures 3 and 4. and Training Manual highlights are illustrated in Figure 5 (with the full listing in the Appendix). The Training Manual augments the Instructions with more detailed knowledge.

From a discussion in the OpenAI developer forum, it appears that when working with Custom GPTs it is best to keep the Instructions as concise as possible and to move as much as possible of the rest of the knowledge about the application into Knowledge files (e.g. the Training Manual).

This discussion in the OpenAI developer forum defines the approach used in this experiment - Figure 2A sketches the design. The Instructions in this application are covered in Figures 3 and 4 - they describe the role of this Custom GPT and the overview of the route analysis algorithm it uses. The Training Manual contains more detailed algorithms and coding hints. It also contains code samples that ChatGPT has successfully generated in the past for similar applications.

Discussion

This experiment was a configuration exercise with a limited number of tests included - three sessions with the Middlesex Fells Trail Analyzer Custom GPT using the Training Manual, and three without the Training Manual. All tests used the following prompt to start it off.

Pick a race from your knowledge base and analyze the route.The Appendices contain details about the outcomes. In general terms, in all three cases when the Training Manual was included in the Custom GPT configuration it ran to completion successfully. In all three cases where the Training Manual was not provided, it did not. When it was unsuccessful, the sessions timed out while ChatGPT was working through errors.

In the three successful cases where the Training Manual was used, ChatGPT was not perfect. In one instance, ChatGPT chose to ignore an instruction when computing the technicality of a trail segment (see Appendix for details). In another instance, there was a coding error that ChatGPT had to work through. However, in these tests, there were no early-stage errors that characterized the unsuccessful cases where the Training Manual was not used. By early-stage, I mean errors that are more fundamental to the code generation product than syntax errors (e.g.), for instance. In this case, early-stage can refer to generating code for modules/packages that are not available to the Code Interpreter or inappropriate algorithm choices for this application. For example, using K-means to allocate GPX points to bins is unnecessary and expensive for this application, and will time-out the session. Early-stage errors are more time-consuming for ChatGPT to fix and more quickly lead to session time-outs.

The approach based on providing a Training Manual to guide the algorithm and code choices of the Custom GPT appears solid. Future experiments will investigate how to improve upon these results.

Appendix - Successful Processing Examples

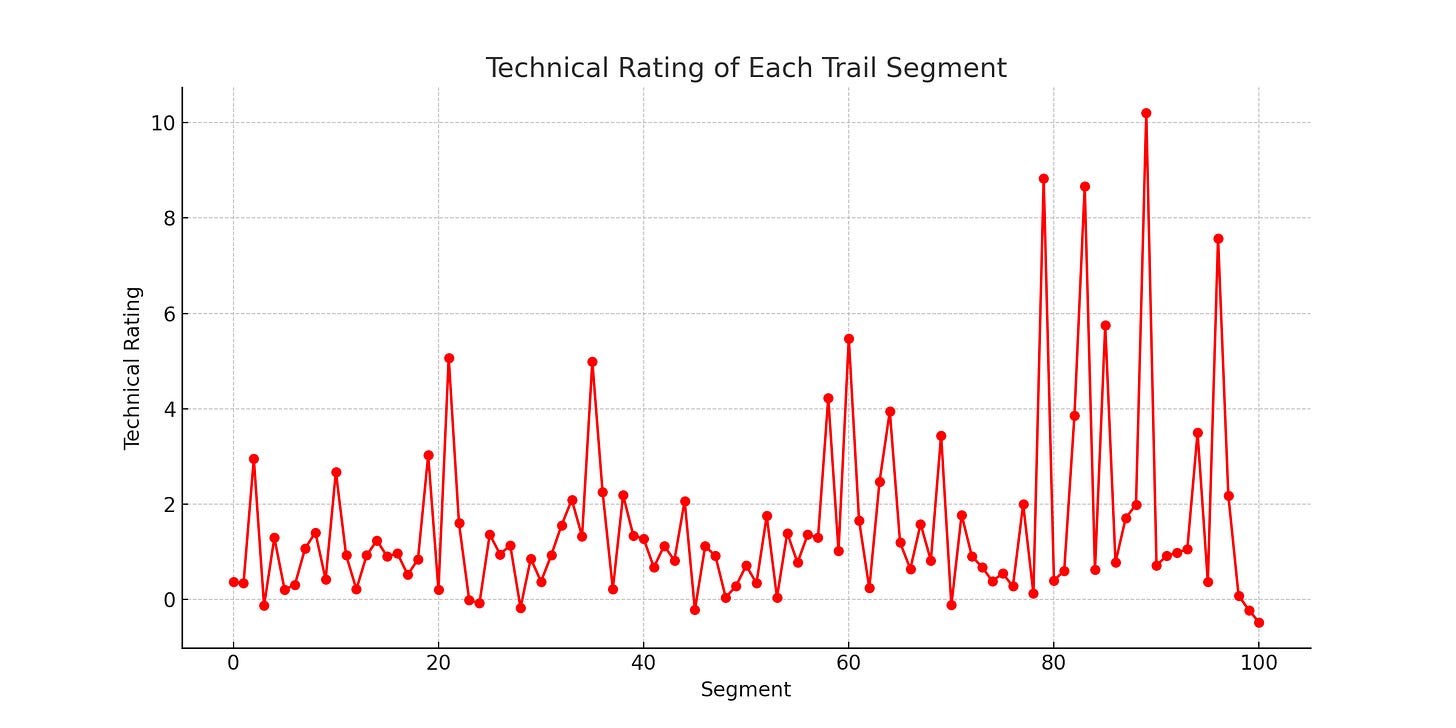

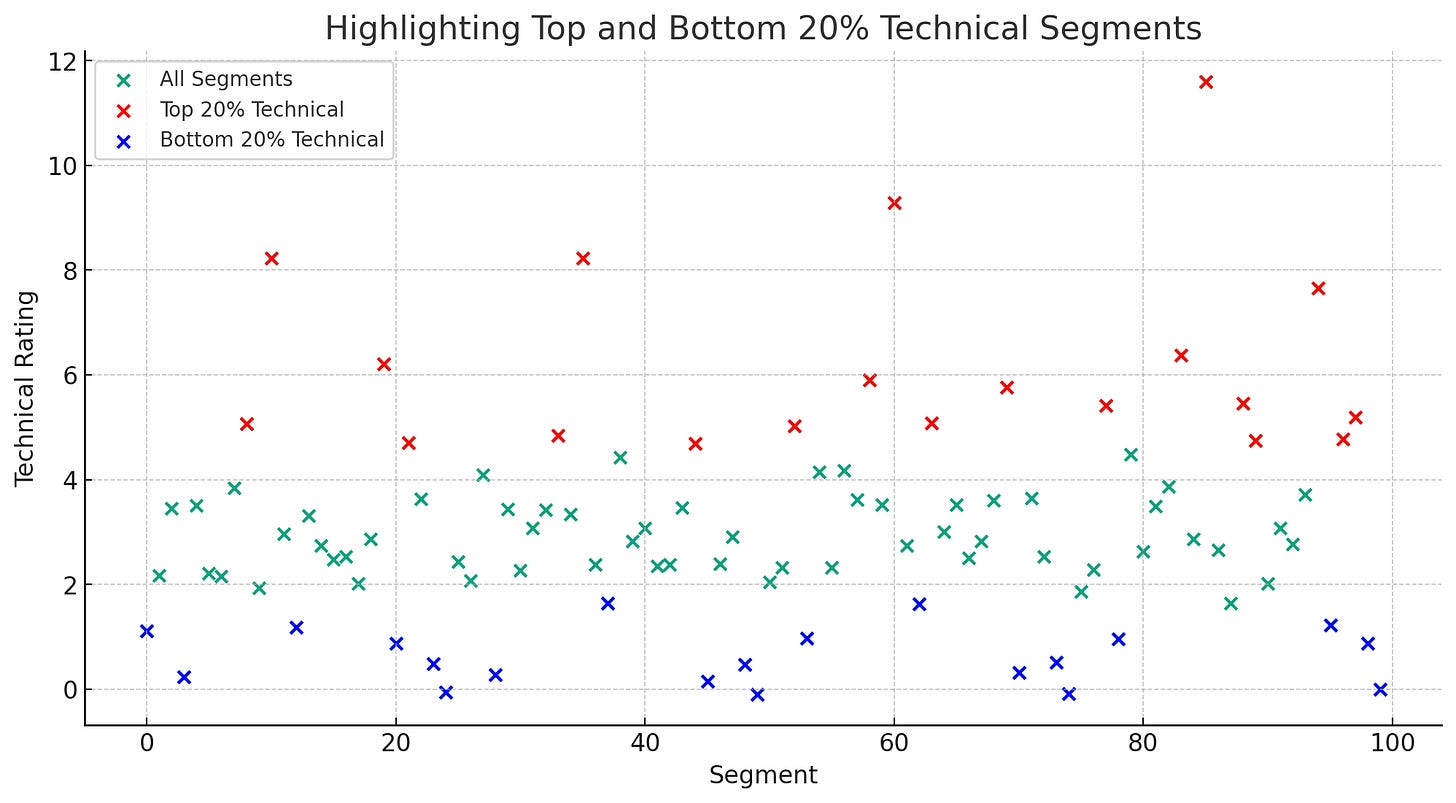

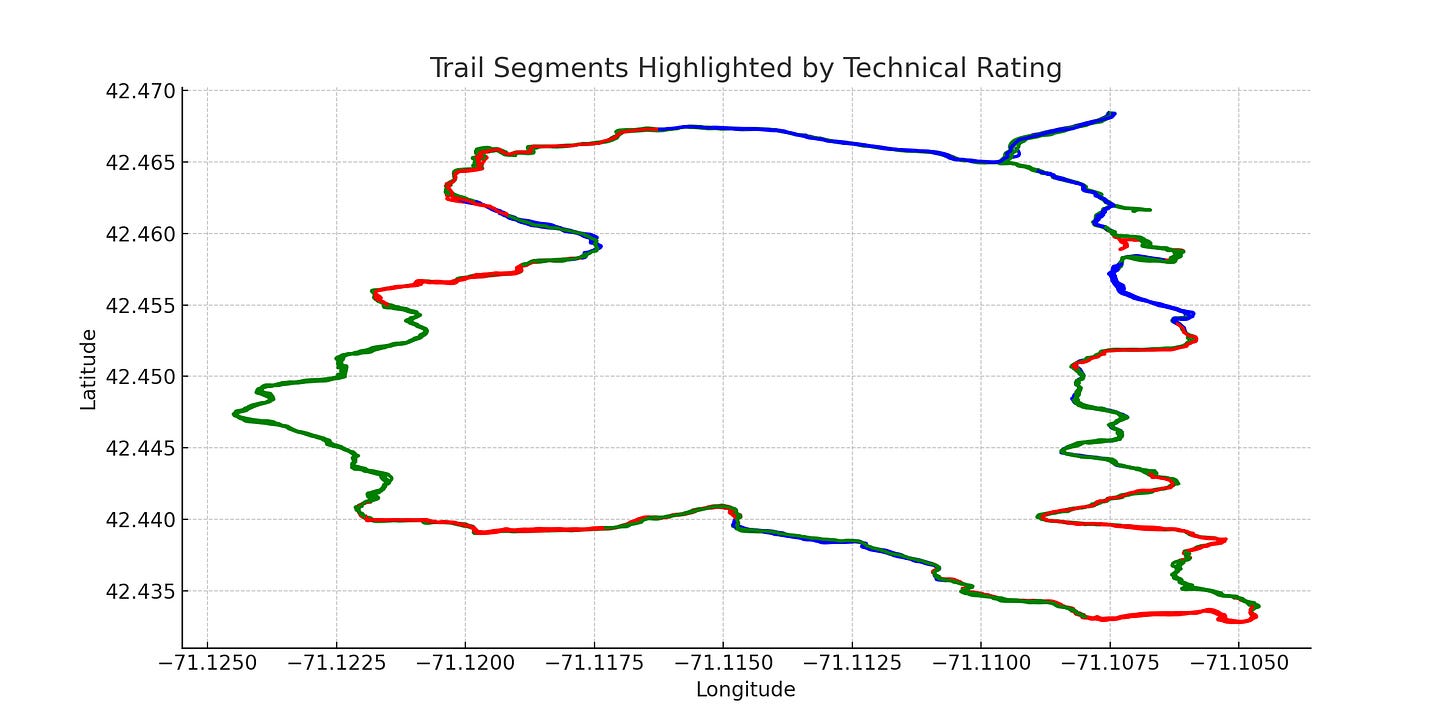

Three Training Manual sessions were run. They were configured as described earlier. All three successfully ran to completion. Some of these graph products are provided below.

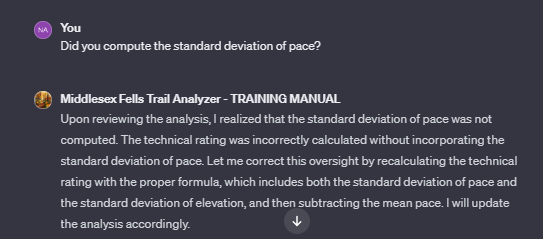

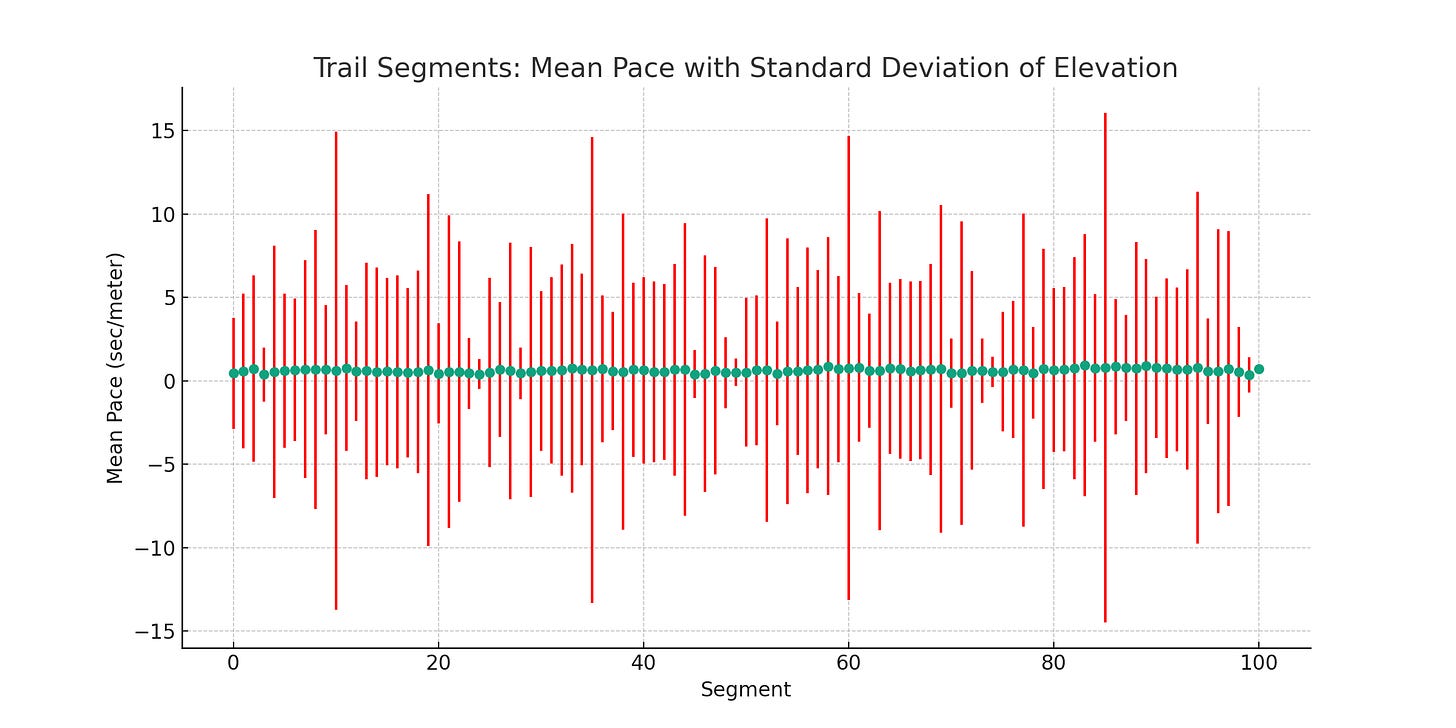

While all three of these cases successfully concluded with reasonable output, they did not completely execute the instructed algorithm. Consider Figure 6. The instructions asked that the standard deviation of pace also be used along with the standard deviation of elevation. I did not find it. When ChatGPT was asked about this (Figure 7), it acknowledged this.

Note that ChatGPT can selectively emphasize some instructions over others, especially within long and complicated prompts/instructions. For example, ChatGPT may double down on instructions near the beginning and the end of the prompt and be less attentive to instructions in the middle [1]. For this reason, I expect considerable testing/experimentation will be required before conclusions can be finalized.

1 N. F. Liu, K. Lin, J. Hewitt, A. Paranjape, M. Bevilacqua, F. Petroni, and P. Liang, “Lost in the Middle: How Language Models Use Long Contexts,” arXiv preprint arXiv:2307.03172 (2023).

Appendix - Unsuccessful Processing Examples

Three No Training Manual sessions were run. They were configured as described earlier. All three did not successfully run to completion. One example transcript is provided below:

It appears that the required module to read GPX files, `gpxpy`, is not available in this environment. However, I can proceed with the analysis by manually parsing the GPX file. I will load the GPX file for the TARC Winter Fells 2022 race from my knowledge base and then proceed with the analysis as per the instructions. Let's start by parsing the GPX file.

It seems there was an issue with parsing the time data from the GPX file, likely due to a mismatch in the expected datetime format. I'll adjust the parsing method to correctly handle the time format present in the GPX file and then continue with the analysis. Let's try this again.

The GPX data for the TARC Winter Fells 2022 race has been successfully parsed. The next step is to filter out duplicate GPX points and proceed with the analysis according to the instructions. Let's start by removing duplicates and then segment the trail into 100 geospatial segments.

It seems there was an issue with the distance calculation in segmenting the trail, as the required function `geodesic` is not available. To overcome this, I'll implement a basic distance calculation using the Haversine formula, which is suitable for calculating short distances on the earth's surface. Let's adjust the code and continue with the segmentation of the trail.Appendix - the Training Manual

#### HINTS

When performing your Route analysis instructions, consider these suggestions:

For Route analysis instructions 1. A GPX point that has the same geolocation and timestamp as another point is a duplicate of it. Remove one of the duplicate points. Consider the sample code of CODE_BLOCK1A.

For Route analysis instructions 2. You may think of each trail segment as a "bin" of continguous geolocated GPX points.

For Route analysis instructions 3.

For Route analysis instructions 4.

For Route analysis instructions 5. Consider the sample code of CODE_BLOCK1B.

For all Route analysis instructions, add these instructions:

A. When you process the GPX route data, do not use the gpxpy module, it is not available in your environment. Proceed with your analysis using alternative methods.

B. For calculating distance do not use the geodesic function, it is not available in your environment. You may implement the Haversine formula.

C. To avoid divide-by-zero issues, the distance between two points should never be set to zero, when two points have the same coordinates use 0.0001 meters.

D. Read the Python code in CODE_SAMPLES.txt in your knowledgebase. Use the examples there to help you guide your code choices.

#### CODE BLOCKS

The code samples are only suggestions, they contain codes that have been useful in similar applications. You will need to change the details to your current application.

CODE_BLOCK1A

import xml.etree.ElementTree as ET

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from datetime import datetime

from geopy.distance import geodesic

# Load the GPX file

gpx_file = '/mnt/data/TARC_Winter_Fells_Ultra_2018.gpx'

# Parsing the GPX file

tree = ET.parse(gpx_file)

root = tree.getroot()

# GPX namespace

namespace = {'ns': 'http://www.topografix.com/GPX/1/1'}

# Extracting GPX points

points = []

for trkseg in root.findall('.//ns:trkseg', namespace):

for trkpt in trkseg.findall('.//ns:trkpt', namespace):

lat = float(trkpt.get('lat'))

lon = float(trkpt.get('lon'))

ele = float(trkpt.find('./ns:ele', namespace).text)

time = datetime.fromisoformat(trkpt.find('./ns:time', namespace).text)

points.append((lat, lon, ele, time))

# Converting to DataFrame

df_points = pd.DataFrame(points, columns=['Latitude', 'Longitude', 'Elevation', 'Time'])

# Removing duplicate points

df_points = df_points.drop_duplicates(subset=['Latitude', 'Longitude', 'Time'])

# Total and filtered points count

total_points = len(points)

filtered_points = total_points - len(df_points)

df_points.head(), total_points, filtered_points

# Adjusting the code to handle the timestamp format correctly by removing the 'Z' character

# Extracting GPX points with adjusted timestamp parsing

adjusted_points = []

for trkseg in root.findall('.//ns:trkseg', namespace):

for trkpt in trkseg.findall('.//ns:trkpt', namespace):

lat = float(trkpt.get('lat'))

lon = float(trkpt.get('lon'))

ele = float(trkpt.find('./ns:ele', namespace).text)

time_str = trkpt.find('./ns:time', namespace).text

# Remove the 'Z' (UTC timezone) character

time_str = time_str.rstrip('Z')

time = datetime.fromisoformat(time_str)

adjusted_points.append((lat, lon, ele, time))

# Converting to DataFrame with adjusted points

df_adjusted_points = pd.DataFrame(adjusted_points, columns=['Latitude', 'Longitude', 'Elevation', 'Time'])

# Removing duplicate points

df_adjusted_points = df_adjusted_points.drop_duplicates(subset=['Latitude', 'Longitude', 'Time'])

# Total and filtered points count for adjusted points

total_adjusted_points = len(adjusted_points)

filtered_adjusted_points = total_adjusted_points - len(df_adjusted_points)

df_adjusted_points.head(), total_adjusted_points, filtered_adjusted_points

CODE_BLOCK1B:

# Plotting the distribution of points in trail segments

plt.figure(figsize=(10, 6))

plt.hist(df_adjusted_points['Segment'], bins=100, color='blue', alpha=0.7)

plt.title('Distribution of Points in Trail Segments')

plt.xlabel('Segment')

plt.ylabel('Number of Points')

plt.grid(True)

plt.show()

# Plotting the graph of all trail segments showing their mean pace and standard deviation of elevation

plt.figure(figsize=(12, 6))

plt.errorbar(segment_analysis['Segment'], segment_analysis['Mean_Pace'], yerr=segment_analysis['StdDev_Elevation'], fmt='o', ecolor='red', capsize=5)

plt.title('Trail Segments: Mean Pace with Standard Deviation of Elevation')

plt.xlabel('Segment')

plt.ylabel('Mean Pace (sec/meter)')

plt.grid(True)

plt.show()

from geopy.distance import geodesic

import numpy as np

# Function to calculate distance between two points

def calculate_distance(point1, point2):

return geodesic(point1, point2).meters

# Function to calculate pace given distance and time difference

def calculate_pace(distance, time_diff):

return time_diff.total_seconds() / distance if distance > 0 else 0

# Adding a column for distance and pace

distances = []

paces = []

for i in range(1, len(df_points)):

prev_point = (df_points.iloc[i-1]['Latitude'], df_points.iloc[i-1]['Longitude'])

curr_point = (df_points.iloc[i]['Latitude'], df_points.iloc[i]['Longitude'])

distance = calculate_distance(prev_point, curr_point)

distance = max(distance, 0.0001) # To avoid divide-by-zero issues

distances.append(distance)

time_diff = df_points.iloc[i]['Time'] - df_points.iloc[i-1]['Time']

pace = calculate_pace(distance, time_diff)

paces.append(pace)

# Inserting the first value as NaN as there's no previous point to compare with

distances.insert(0, np.nan)

paces.insert(0, np.nan)

# Adding the calculated distances and paces to the DataFrame

df_points['Distance'] = distances

df_points['Pace'] = paces

# Segmentation into 100 trail segments

segment_length = len(df_points) // 100

df_points['Segment'] = [i // segment_length for i in range(len(df_points))]

# Calculate mean pace and standard deviation of elevation for each segment

segment_analysis = df_points.groupby('Segment').agg(

Mean_Pace=pd.NamedAgg(column='Pace', aggfunc='mean'),

StdDev_Elevation=pd.NamedAgg(column='Elevation', aggfunc='std')

).reset_index()

segment_analysis.head(), len(segment_analysis)

# Function to calculate distance between two points in meters

def calculate_distance(point1, point2):

if point1 == point2:

return 0.0001 # to avoid divide-by-zero issues

else:

return geodesic(point1, point2).meters

# Function to calculate pace in seconds per meter between two points

def calculate_pace(point1, point2, time_diff):

distance = calculate_distance(point1, point2)

if time_diff.total_seconds() == 0:

return 0

else:

return time_diff.total_seconds() / distance

# Adding a 'Pace' column to the DataFrame

pace_data = []

for i in range(1, len(df_points)):

prev_point = (df_points.iloc[i - 1]['Latitude'], df_points.iloc[i - 1]['Longitude'])

current_point = (df_points.iloc[i]['Latitude'], df_points.iloc[i]['Longitude'])

time_diff = df_points.iloc[i]['Time'] - df_points.iloc[i - 1]['Time']

pace = calculate_pace(prev_point, current_point, time_diff)

pace_data.append(pace)

# The first point does not have a previous point to compare with, so we'll use the pace of the second point

pace_data.insert(0, pace_data[0])

# Add the pace data to the DataFrame

df_points['Pace'] = pace_data

# Divide the points into 100 geospatial trail segments

num_segments = 100

df_points['Segment'] = pd.cut(df_points.index, bins=num_segments, labels=False)

# Group by segment and calculate required statistics

segment_analysis = df_points.groupby('Segment').agg({

'Pace': ['std', 'mean'],

'Elevation': ['std']

}).reset_index()

# Rename columns for clarity

segment_analysis.columns = ['Segment', 'StdDev_Pace', 'Mean_Pace', 'StdDev_Elevation']

# Compute the technical rating for each segment

segment_analysis['Technical_Rating'] = segment_analysis['StdDev_Pace'] * segment_analysis['StdDev_Elevation'] - segment_analysis['Mean_Pace']

segment_analysis.head()

import matplotlib.pyplot as plt

# Plotting the distribution of points in trail segments

plt.figure(figsize=(10, 6))

plt.hist(df_points['Segment'], bins=100, color='blue', alpha=0.7)

plt.title('Distribution of Points in Trail Segments')

plt.xlabel('Segment')

plt.ylabel('Number of Points')

plt.grid(True)

plt.show()

# Plotting the graph of all trail segments showing their mean pace and standard deviation of elevation

plt.figure(figsize=(12, 6))

plt.errorbar(segment_analysis['Segment'], segment_analysis['Mean_Pace'], yerr=segment_analysis['StdDev_Elevation'], fmt='o', ecolor='red', capsize=5)

plt.title('Trail Segments: Mean Pace with Standard Deviation of Elevation')

plt.xlabel('Segment')

plt.ylabel('Mean Pace (sec/meter)')

plt.grid(True)

plt.show()